I've started to notice that the biggest challenges in AI are not always about intelligence. They are about coordination. Models are becoming more capable each year, but they rarely operate in isolation anymore. One system generates signals; another executes decisions; a third evaluates outcomes. These pieces interact constantly, yet the infrastructure connecting them often feels improvised. That is the backdrop I keep in mind when I look at Mira Network and its vision for AI interoperability.

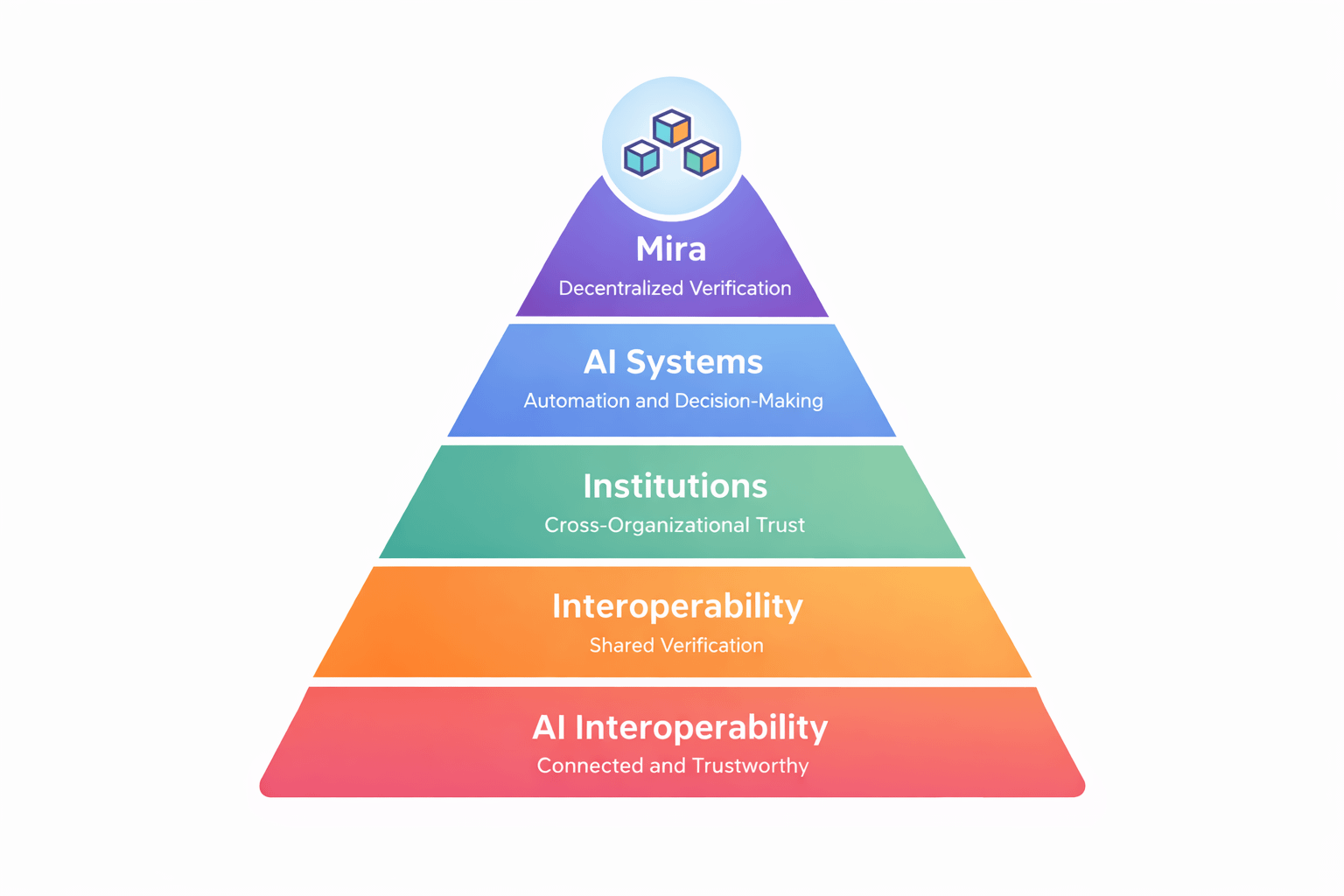

Interoperability sounds like a technical problem at first. Different models need compatible interfaces. Data formats need to align. Systems need to communicate reliably. These are real issues, but what I have learned over time is that the deeper challenge is trust. When multiple AI systems interact across organizational boundaries, each participant needs confidence that the others have behaved as expected. Without that assurance, coordination becomes fragile.

Centralized platforms attempt to solve this by acting as the source of truth. One company runs the infrastructure, logs every action, and provides explanations when something goes wrong. That arrangement works reasonably well inside closed ecosystems. But as AI spreads across industries, relying on a single operator to verify everything begins to look less comfortable. Interoperability across organizations requires verification that does not belong to any single participant.

That is where Mira’s approach begins to stand out to me.

Rather than focusing on building AI models or optimizing inference performance, Mira concentrates on verifying claims about AI behavior. Inputs, constraints, execution context, and outputs can be recorded and validated through decentralized infrastructure. In a world where AI systems increasingly depend on one another, that verification layer could become the connective tissue that allows them to cooperate.
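To make that concrete for myself, here is a minimal sketch of what recording and validating a claim might look like. It is not Mira's actual API or protocol: the `ClaimRecord` fields, the `claim_digest` and `is_verified` helpers, and the two-thirds quorum are all my own assumptions, chosen only to illustrate the idea of independent parties checking the same record.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ClaimRecord:
    """Hypothetical record of one AI action; field names are illustrative."""
    inputs: dict
    constraints: dict
    execution_context: dict
    outputs: dict


def claim_digest(record: ClaimRecord) -> str:
    # Canonical JSON (sorted keys, fixed separators) so that every party
    # hashing the same record arrives at the same digest.
    canonical = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_verified(published_digest: str, validator_digests: list,
                quorum_num: int = 2, quorum_den: int = 3) -> bool:
    """Accept when at least quorum_num/quorum_den of the validators
    independently recomputed the published digest (assumed rule)."""
    if not validator_digests:
        return False
    matches = sum(1 for d in validator_digests if d == published_digest)
    # Integer comparison avoids floating-point rounding at the threshold.
    return matches * quorum_den >= quorum_num * len(validator_digests)


record = ClaimRecord(
    inputs={"prompt": "rebalance portfolio"},
    constraints={"max_trade_usd": 1000},
    execution_context={"model": "example-model", "version": "1"},
    outputs={"action": "sell", "amount_usd": 250},
)
published = claim_digest(record)
```

The point of the sketch is that a downstream system only needs the digest and the validators' answers, not access to the operator's internal logs.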

From my perspective, this shifts interoperability from a purely technical question to an institutional one. It is not just about whether systems can communicate. It is about whether they can rely on each other’s records.

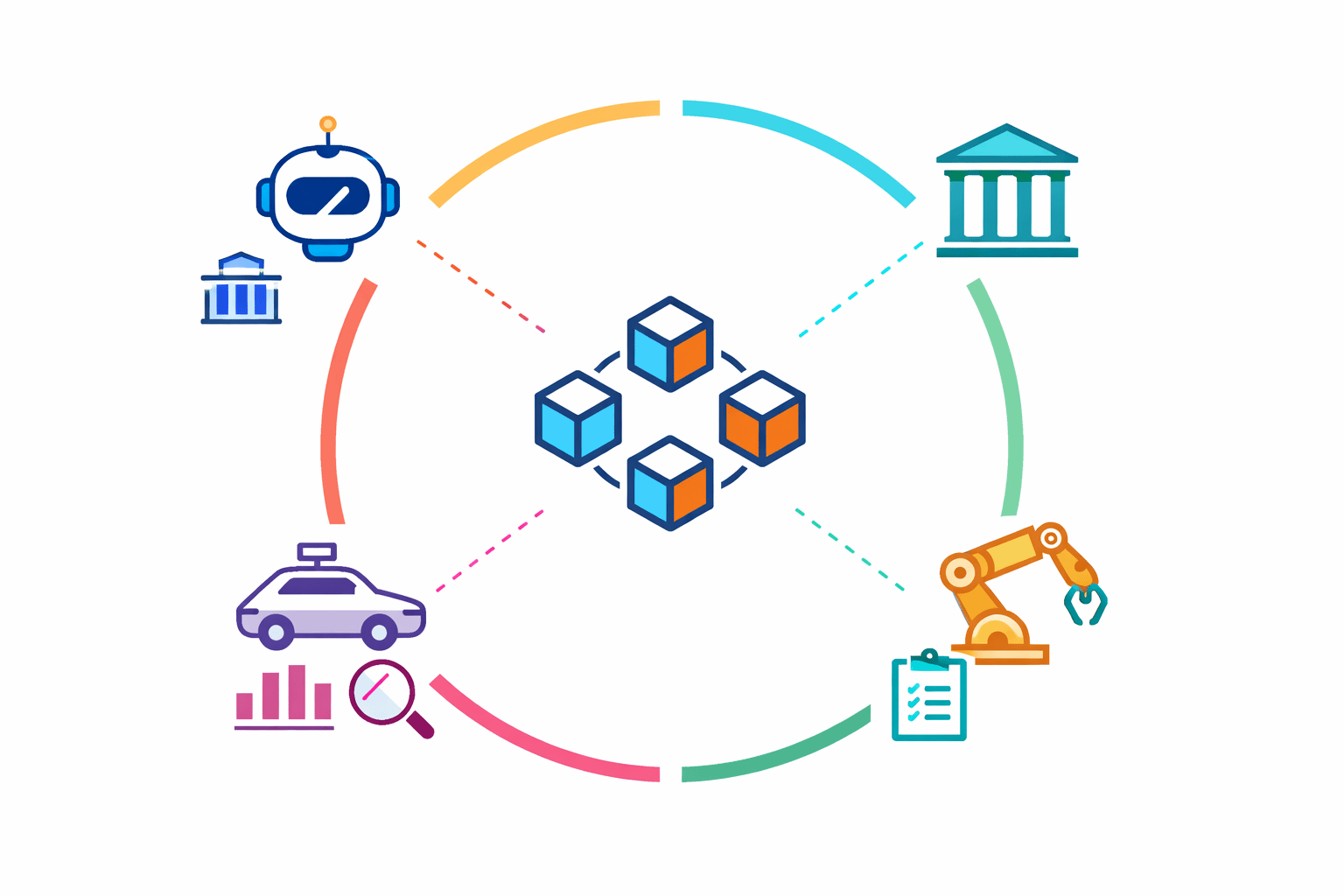

Imagine an ecosystem where multiple AI agents coordinate across financial services, logistics, and infrastructure management. Each system operates independently but occasionally triggers actions that affect others. If those actions are recorded and verified through a shared layer, interoperability becomes less fragile. Systems do not need to trust the operator behind another AI model. They need to trust the verification process around it.

Of course, I am careful not to assume this solves everything.

Verification networks introduce their own complexities. Validator incentives must stay aligned with honest verification. Data formats need to remain standardized. Integration must remain simple enough that developers do not abandon the process when speed becomes critical. Interoperability frameworks often struggle not because they lack ambition, but because they impose too much friction on participants.

Another challenge is diversity. AI systems are deployed across radically different environments, from autonomous trading strategies to healthcare diagnostics to robotics coordination. A verification layer that works well in one domain might struggle in another. Mira’s ability to support interoperability depends on how adaptable its infrastructure becomes as these different use cases emerge.

What I find compelling is that Mira’s vision does not rely on central orchestration. Instead of creating a single hub that every AI system must connect to, it attempts to provide a shared mechanism for verifying what systems claim to do. That approach feels more compatible with the decentralized reality of AI development today, where no single entity controls the entire ecosystem.

Still, infrastructure rarely proves itself through vision alone. It proves itself through adoption patterns. If developers begin integrating verification into their systems as a default step, much like logging or authentication today, that is when interoperability begins to stabilize. If verification remains optional or cumbersome, the ecosystem will likely continue relying on fragmented trust models.
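What "default step" could feel like in practice is something close to a logging decorator. The sketch below is purely illustrative: `with_verification`, `SUBMITTED_RECORDS`, and `score_shipment` are hypothetical names of mine, and the in-memory list stands in for whatever a real verification layer would expose.

```python
import functools
import hashlib
import json

# Stand-in for a shared verification layer (assumption); a real integration
# would submit the record over the network instead of appending to a list.
SUBMITTED_RECORDS = []


def with_verification(func):
    """Record a digest of each call's inputs and output, much like a
    logging decorator records a message."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        result = func(*args, **kwargs)
        payload = json.dumps(
            {"function": func.__name__, "args": args,
             "kwargs": kwargs, "result": result},
            sort_keys=True,
            default=str,  # fall back to str() for non-JSON values
        )
        SUBMITTED_RECORDS.append({
            "function": func.__name__,
            "digest": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        })
        return result
    return wrapper


@with_verification
def score_shipment(weight_kg: float, priority: str) -> dict:
    # Placeholder for a real model call.
    route = "express" if priority == "high" else "standard"
    return {"route": route, "weight_kg": weight_kg}


decision = score_shipment(12.5, priority="high")
```

If integration stays at roughly this cost, one line above a function, I can imagine it becoming habitual. If it demands much more, I suspect developers will skip it when deadlines press.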

For now, I see Mira’s role as exploratory. It is testing whether decentralized verification can become the connective layer between independent AI systems. The promise of a connected AI landscape depends less on intelligence breakthroughs and more on whether systems can coordinate without constantly renegotiating trust.

Whether Mira becomes part of that connective infrastructure is something time will reveal. Interoperability often emerges quietly, once enough systems begin depending on the same underlying rails. Until then, the vision remains plausible but unfinished, and the open question is whether verification can scale alongside the intelligence it is meant to support.