Not just a safety layer, but a standards layer that enforces verifiable credibility before autonomous systems are deployed at scale.

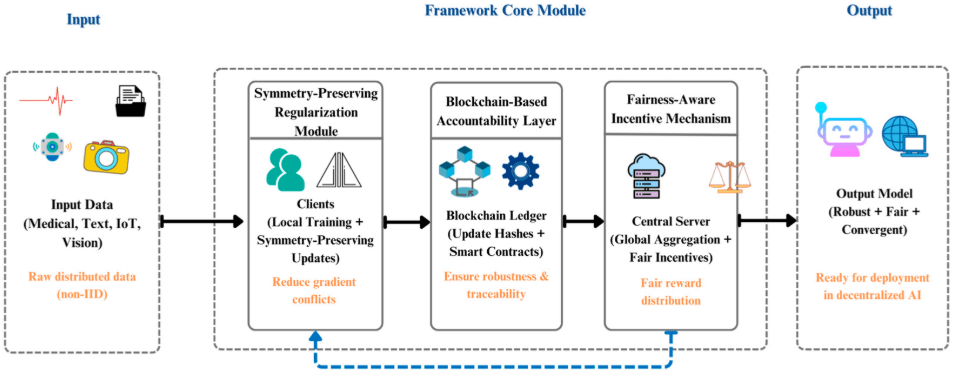

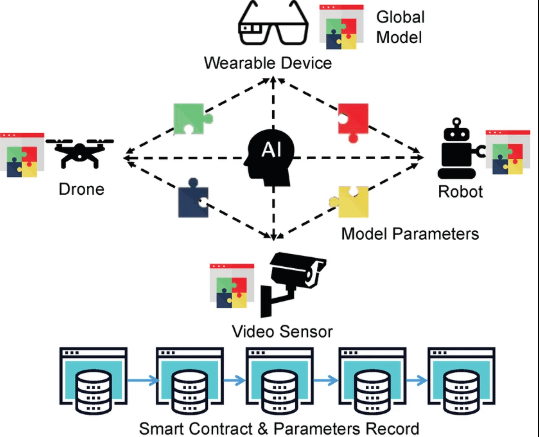

Right now, most AI systems operate in silos. One model generates a response and another might double-check it, but without a shared verification standard there is no universal accountability in decentralized systems. Mira addresses this by breaking AI outputs into discrete, verifiable claims and distributing them across independent validators, so that acceptance rests on consensus rather than blind trust.
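To make the claim-and-consensus idea concrete, here is a minimal sketch. It is not Mira's actual protocol: the sentence-level claim splitting, the `Validator` type, the function names, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List


@dataclass(frozen=True)
class Claim:
    text: str


# Hypothetical validator interface: takes a claim, returns True if it judges the claim valid.
Validator = Callable[[Claim], bool]


def split_into_claims(output: str) -> List[Claim]:
    """Naive decomposition (assumption): treat each sentence as one claim."""
    return [Claim(s.strip()) for s in output.split(".") if s.strip()]


def reach_consensus(
    claim: Claim,
    validators: List[Validator],
    threshold: Fraction = Fraction(2, 3),  # illustrative supermajority, not a documented value
) -> str:
    """Collect independent votes and accept or reject only on a supermajority."""
    votes = [validate(claim) for validate in validators]
    yes = sum(votes)
    no = len(votes) - yes
    if Fraction(yes, len(votes)) >= threshold:
        return "accepted"
    if Fraction(no, len(votes)) >= threshold:
        return "rejected"
    return "undecided"  # validators disagree too much to settle the claim
```

The `undecided` outcome matters: a design like this has to say what happens when validators split, instead of forcing every claim into accept or reject.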

This idea is different from “risk management.”

It’s about trust infrastructure.

Instead of merely reducing hallucinations, Mira aims to establish reproducible audit trails that prove an AI’s reasoning process in a cryptographically anchored way, much like a public ledger of intelligent decisions.
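One common way to anchor an audit trail cryptographically is a hash chain, where each record commits to the one before it. The sketch below illustrates that general technique only. The record fields and function names are assumptions, and nothing here describes how Mira actually stores its records.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, str]) -> str:
    # Serialize with sorted keys so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, str]], claim: str, verdict: str) -> None:
    """Append a verification record that commits to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"claim": claim, "verdict": verdict, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {
            "claim": record["claim"],
            "verdict": record["verdict"],
            "prev_hash": record["prev_hash"],
        }
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Because each hash covers the previous one, quietly changing an old verdict invalidates every later record, which is what makes such a trail auditable by anyone holding a copy.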

Of course, this does not eliminate every challenge.

Consensus verification systems have limitations of their own: validators might disagree, economic incentives could drift, and increasingly complex AI logic might stretch the verification protocols. As AI systems evolve, verification standards must evolve too.

But this is where Mira’s architecture shows promise. By aligning incentives, rewarding validators for honest validation and penalizing incorrect validation, Mira creates economic accountability for AI behavior.
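The incentive mechanism can be pictured as a simple stake-and-slash settlement. The sketch below is a toy model under stated assumptions: the reward amount, the slash fraction, and the use of "voted with the final outcome" as a stand-in for "validated correctly" are all illustrative, not Mira's published parameters.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    outcome: bool,
    reward: float = 1.0,          # hypothetical flat reward per correct vote
    slash_fraction: float = 0.1,  # hypothetical share of stake lost per incorrect vote
) -> Dict[str, float]:
    """Return updated stakes after one verification round, leaving the input untouched."""
    updated = dict(stakes)
    for validator, vote in votes.items():
        if vote == outcome:
            updated[validator] += reward
        else:
            updated[validator] -= updated[validator] * slash_fraction
    return updated
```

For example, with three validators each staking 100.0, two voting with the outcome and one against, the two end at 101.0 and the third at 90.0. A proportional slash means the cost of a wrong validation grows with the stake a validator has at risk.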

The broader vision aligns with a decentralized future where transparency and open participation matter more than central authority: a future where AI decisions are not only powerful but provably correct.

If Mira can establish its verification layer as a de facto standard, it will be building more than safety. It will be building confidence in AI systems across industries such as finance, healthcare, governance, and autonomous infrastructure.