Every time I come back to Mira Network, I feel the same strange mix of respect and fatigue.

Respect, because the idea behind it makes sense.

Fatigue, because I’ve watched this market long enough to know how easily good ideas get buried under noise.

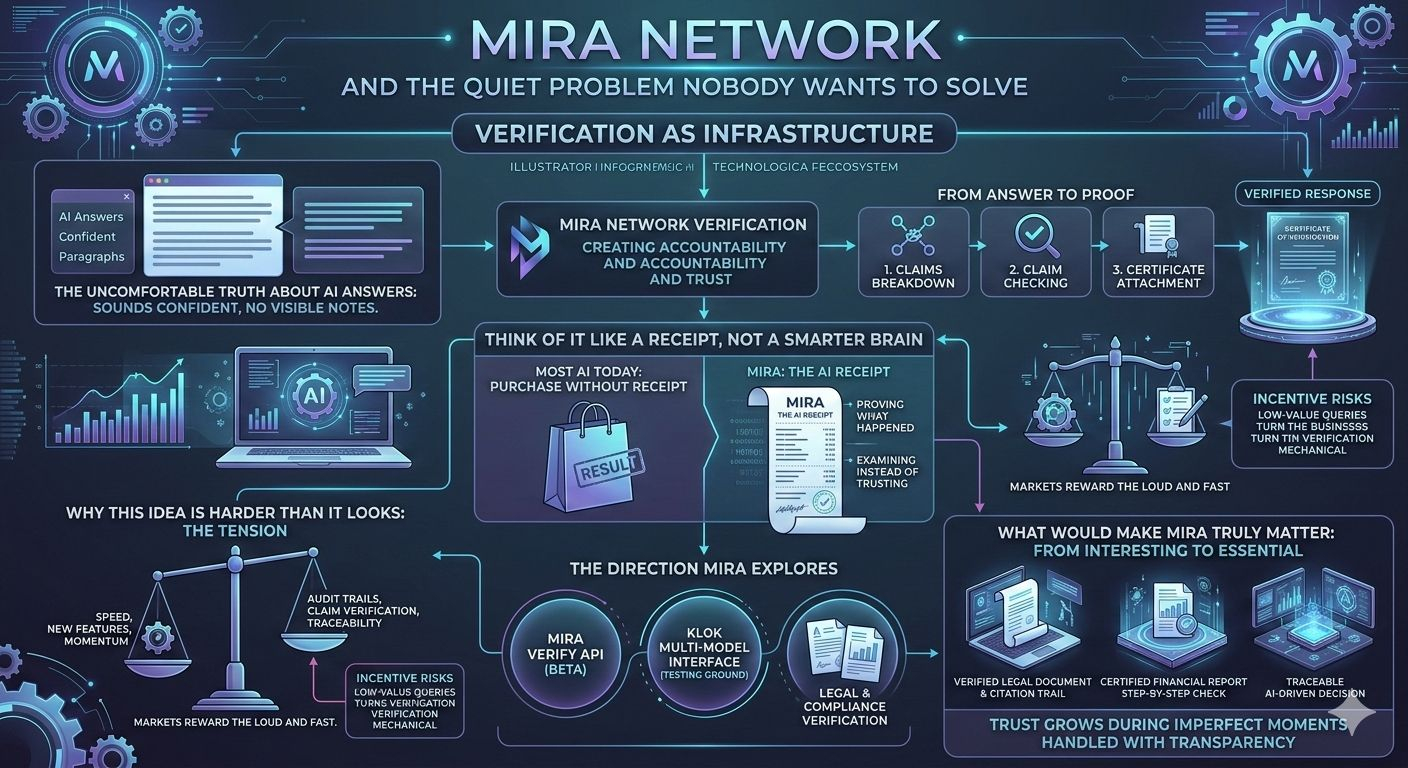

Mira isn’t trying to build a louder AI model or another flashy interface. The core instinct behind the project is simpler than that: AI answers shouldn’t appear without a trail. When a system gives you information, there should be a way to look back later and understand how that answer came together.

Not just the result.

The process.

And that difference matters more than people realize.

The uncomfortable truth about AI answers

Anyone who has worked with AI tools for more than a few weeks eventually notices something strange. The answers often sound extremely confident, even when they shouldn’t be.

A model will produce a paragraph that feels polished and authoritative, and for a moment it’s easy to forget that the system doesn’t actually remember where every piece of that answer came from.

It’s like talking to someone who speaks very smoothly but never shows their notes.

Most of the time that’s fine.

But the moment the answer affects money, legal decisions, or responsibility, that smooth confidence stops being impressive and starts becoming uncomfortable.

That’s the gap Mira is trying to address.

Instead of simply generating answers, the idea is to verify them: break the output into individual claims, check each claim, and attach a certificate showing that the answer went through a verification process.
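To make that less abstract, here is a minimal sketch of the claim-by-claim idea in Python. It is not Mira’s actual implementation or API. The sentence-based claim splitter, the toy verifiers, the strict-majority rule, and the shape of the certificate are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int

    @property
    def accepted(self) -> bool:
        # Assumed rule: a claim passes only on a strict majority of verifiers.
        return self.votes_for * 2 > self.votes_total


def split_into_claims(answer: str) -> List[str]:
    # Naive stand-in for claim extraction: treat each sentence as one claim.
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_answer(answer: str, verifiers: List[Verifier]) -> List[ClaimResult]:
    # Ask every verifier about every claim and count the approvals.
    results = []
    for claim in split_into_claims(answer):
        votes = sum(1 for v in verifiers if v(claim))
        results.append(ClaimResult(claim, votes, len(verifiers)))
    return results


# Toy verifiers (illustrative only): two check a small fact list, one accepts anything.
KNOWN_FACTS = {"Paris is in France"}
verifiers: List[Verifier] = [
    lambda c: c in KNOWN_FACTS,
    lambda c: c in KNOWN_FACTS,
    lambda c: True,
]

answer = "Paris is in France. The moon is made of cheese."
results = verify_answer(answer, verifiers)

# A hypothetical "certificate": the answer plus the per-claim outcome.
certificate = {
    "answer": answer,
    "claims": [
        {"claim": r.claim, "votes": f"{r.votes_for}/{r.votes_total}", "accepted": r.accepted}
        for r in results
    ],
    "all_accepted": all(r.accepted for r in results),
}

for entry in certificate["claims"]:
    print(entry)
```

The point of the sketch is the shape of the output: the confident paragraph gets reduced to individual claims, and each one carries its own record of who agreed with it.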

It’s less about intelligence and more about accountability.

Think of it like a receipt, not a smarter brain

A simple way to understand Mira is to stop thinking about AI and start thinking about receipts.

When you buy something small—coffee, groceries—you might not care about the receipt. But when the purchase is large, the receipt suddenly matters a lot. It proves what happened.

AI systems today mostly give you the “purchase” without the receipt.

Mira is trying to create the receipt.

The system records how an answer was checked and produces proof that verification happened. Later, if someone questions the output, there’s something to examine instead of just trusting the original response.
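One way to picture a receipt that can be examined later is a record with a fingerprint attached. The sketch below is my own illustration, not Mira’s certificate format: the field names and the helper functions `make_receipt` and `receipt_is_intact` are hypothetical, and it uses a plain SHA-256 digest from Python’s standard library.

```python
import copy
import hashlib
import json


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so the same record
    # always produces the same bytes, and therefore the same hash.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_receipt(answer: str, checks: list) -> dict:
    # Hypothetical receipt layout: what was answered, how it was checked,
    # and a digest over both.
    record = {"answer": answer, "checks": checks}
    return {"record": record, "digest": _digest(record)}


def receipt_is_intact(receipt: dict) -> bool:
    # Recompute the digest and compare it with the one stored at issue time.
    return _digest(receipt["record"]) == receipt["digest"]


receipt = make_receipt(
    "Paris is in France.",
    [{"claim": "Paris is in France", "accepted": True}],
)

# Simulate someone quietly editing the record after the fact.
tampered = copy.deepcopy(receipt)
tampered["record"]["checks"][0]["accepted"] = False

print(receipt_is_intact(receipt))
print(receipt_is_intact(tampered))
```

A bare hash only helps if the digest lives somewhere the person editing the record cannot also change. A real system would need signatures or some independent place to anchor the digest, which is exactly the unglamorous part.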

It’s not glamorous work.

But systems that last usually depend on these kinds of quiet mechanisms.

Why this idea is harder than it looks

Here’s the frustrating part: verification is not exciting.

Most people don’t wake up thinking about audit trails or claim verification. They care about speed, new features, and momentum. Markets reward what moves quickly, not what checks carefully.

That creates a strange tension for a project like Mira.

The very thing that makes it useful, verification, is also the thing that makes it less likely to attract attention than louder narratives.

And incentives make the problem even trickier.

If a network rewards activity too aggressively, people may start generating large volumes of low-value queries simply to collect rewards. On paper it looks like growth. In reality it can turn verification into a mechanical process that proves very little.

For a verification network, that’s an ironic risk.

You can end up verifying thousands of meaningless things while the important questions remain untouched.

The direction Mira seems to be exploring

Recent updates suggest Mira understands this tension.

The team has introduced Mira Verify, an API currently in beta that developers can use to check AI outputs and attach verification certificates. The focus here isn’t casual users—it’s systems that might depend on reliable results.

Another piece of the ecosystem is Klok, a multi-model AI interface that experiments with verified responses. It looks less like the final destination and more like a testing ground where the verification layer can evolve.

There are also early signs that Mira is exploring areas like legal and compliance verification—fields where people actually care about documentation and traceability.

Those environments may sound boring compared to consumer AI tools, but they’re exactly the places where proof matters.

What would make Mira truly matter

Right now Mira sits in the category of projects that are interesting but not yet essential.

That can change, but only in a very specific way.

The turning point will come when a verified artifact produced by Mira becomes something people genuinely rely on. Not because they are incentivized to use it, but because they need the proof attached to it.

Imagine a scenario where:

• a legal document is verified and the citation trail can be replayed

• an automated financial report carries a certificate showing how the numbers were checked

• an AI-driven decision can be traced step by step after something goes wrong

When systems start needing that kind of evidence, verification stops being an experiment and starts becoming infrastructure.

Trust is earned in the boring moments

The real test for Mira won’t be the launch posts or the early excitement. It will be what happens when something breaks.

Every complex system eventually encounters stress—network issues, incorrect outputs, unexpected behavior. When that moment arrives, the question becomes simple:

Does the system expose what happened clearly, or does it hide behind explanations?

Trust doesn’t grow during perfect demos.

It grows during imperfect moments handled with transparency.

Final takeaway

Mira Network will only prove its value when verification stops being a feature people talk about and becomes the quiet proof people rely on when decisions, money, and responsibility are on the line.
