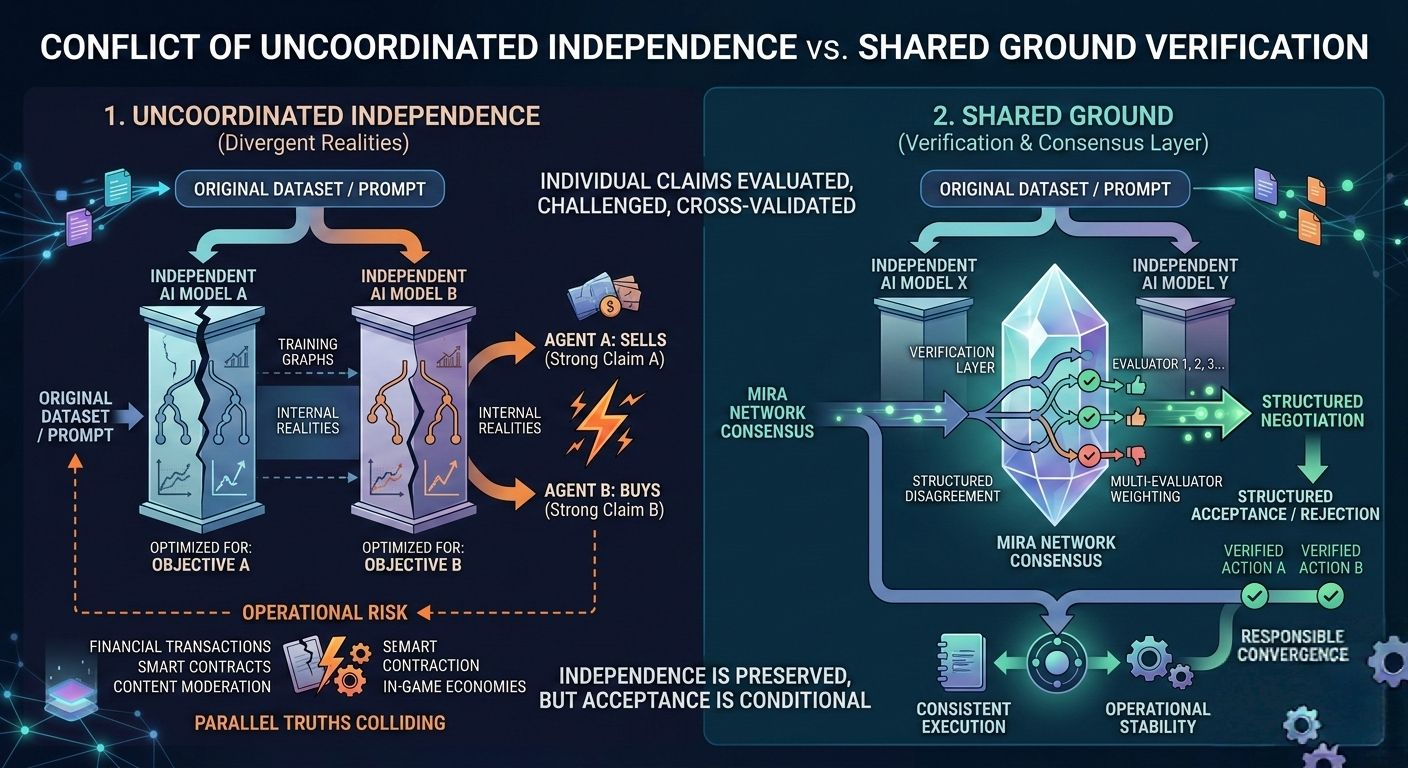

When I first started looking closely at @Mira - Trust Layer of AI, what stood out wasn’t decentralization in the usual sense. It was divergence. In AI today, two models trained on different data and optimized for different objectives can look at the same prompt and produce fundamentally different interpretations. Both can sound coherent. Both can appear confident. Yet they may be operating on entirely separate internal “realities.”

The idea that really clicked for me was that independence, without coordination, can amplify fragmentation. We often celebrate model diversity as resilience. But when autonomous agents begin making financial decisions, executing smart contracts, moderating content, or running in game economies, divergence isn’t philosophical. It becomes operational risk.

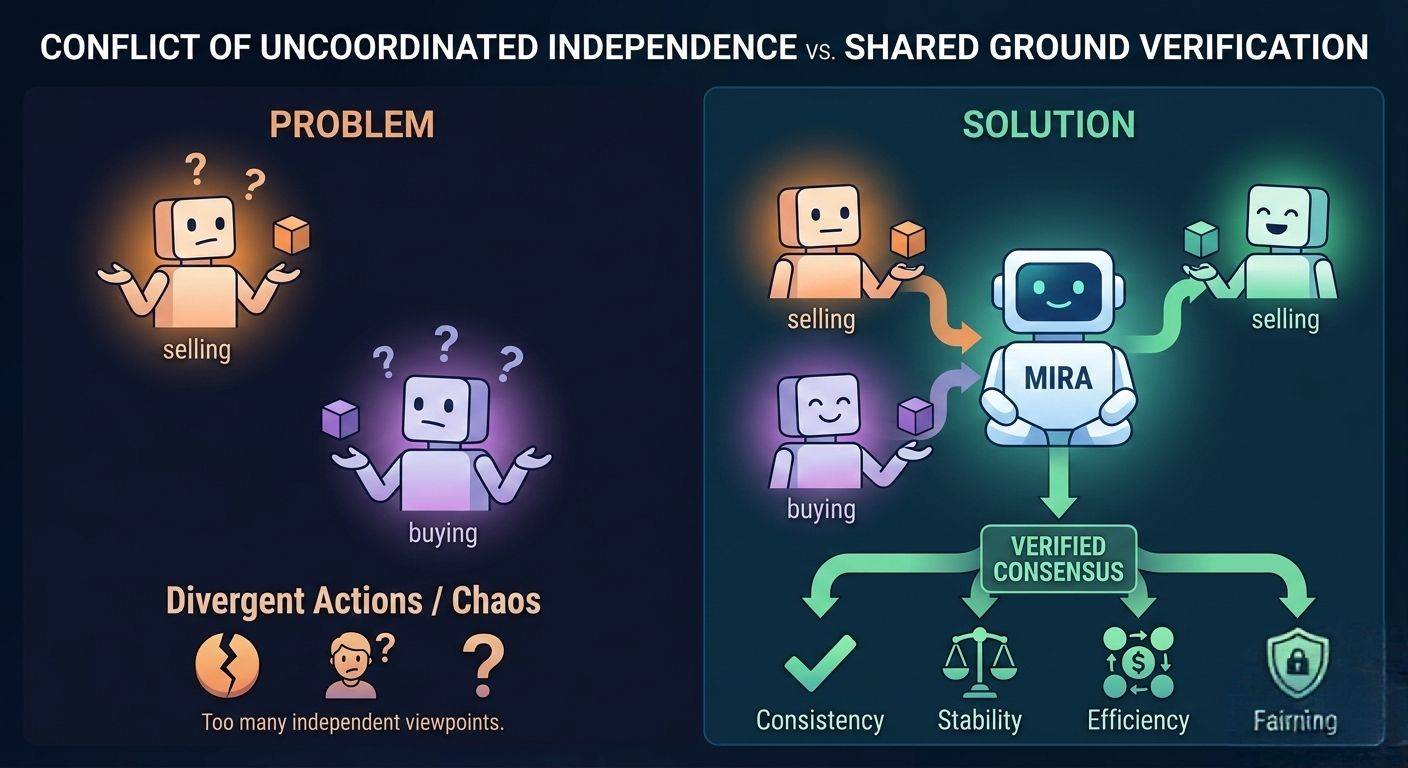

Mira’s approach reframes this problem. Instead of assuming that one model’s output is sufficient on its own, it introduces a verification- and consensus-oriented layer around AI claims. Independent systems can generate outputs, but those outputs can be evaluated, challenged, and cross-validated through structured mechanisms anchored on-chain. In other words, independence is preserved, but acceptance is conditional.
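To make "acceptance is conditional" concrete, here is a minimal sketch of how I picture it. This is my own illustration, not Mira’s actual protocol: the `Verifier` type, the `consensus_verdict` function, and the two-thirds quorum are all hypothetical, and the on-chain anchoring is left out entirely.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable, Optional

# Hypothetical verifier: any function that takes a claim and returns a verdict label.
Verifier = Callable[[str], str]


def consensus_verdict(
    claim: str,
    verifiers: list[Verifier],
    quorum: Fraction = Fraction(2, 3),  # assumed threshold, not taken from Mira
) -> Optional[str]:
    """Return the verdict shared by at least `quorum` of verifiers, else None."""
    if not verifiers:
        return None
    # Each verifier evaluates the claim independently.
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    # The claim is accepted only if enough independent verifiers agree.
    if Fraction(count, len(verifiers)) >= quorum:
        return verdict
    return None
```

With three verifiers, two matching verdicts are enough to finalize under this assumed quorum. A three-way split returns `None`, meaning the claim stays unaccepted rather than defaulting to whichever model answered first.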

This matters more than it first appears. In a world of AI agents interacting with other AI agents, reality is no longer just human-defined. If one agent interprets a dataset one way and another reaches a contradictory conclusion, which one triggers a transaction? Which one governs a DAO proposal? Which one controls a game asset? Without shared verification, you get parallel truths colliding in real time.

What impressed me about Mira is that it doesn’t try to eliminate divergence. It acknowledges it. The network creates space for multiple evaluators and verifiers to weigh in before a claim is finalized. That design feels less like forcing uniformity and more like building a structured negotiation between machines.

Stepping back, this feels deeply human. Our institutions already work this way. Courts have opposing counsel. Academic research has peer review. Markets have price discovery across participants with conflicting views. Mira brings a similar logic to AI-native systems: truth is strengthened through structured disagreement, not blind acceptance.

In practical ecosystems, this has clear implications. AI-powered trading agents can be required to pass verification thresholds before executing large transactions. Autonomous research tools can log validation trails before publishing conclusions. In gaming or virtual environments, AI-driven events can be checked for consistency and fairness before affecting user assets. These are not abstract scenarios. They are emerging use cases where divergent AI realities can directly impact real people.
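As a rough illustration of the trading case, a gate like the one below would hold back large transactions until enough verifiers have approved, and would record every decision in a validation trail. Everything here is an assumption on my part: `GatedExecutor`, the `large_amount` cutoff, and the `min_approvals` count are hypothetical names and values, not anything published by Mira.

```python
from dataclasses import dataclass, field


@dataclass
class GatedExecutor:
    """Hypothetical gate: large transactions need a minimum number of approvals."""

    large_amount: float = 10_000.0  # assumed cutoff for a "large" transaction
    min_approvals: int = 3          # assumed verification threshold
    trail: list[dict] = field(default_factory=list)  # validation trail

    def execute(self, amount: float, approvals: int) -> bool:
        # Small transactions pass without verification in this sketch.
        required = self.min_approvals if amount >= self.large_amount else 0
        allowed = approvals >= required
        # Log every decision, whether or not the transaction went through.
        self.trail.append(
            {
                "amount": amount,
                "approvals": approvals,
                "required": required,
                "executed": allowed,
            }
        )
        return allowed
```

The point of the trail is that a rejected transaction leaves the same kind of record as an executed one, so anyone auditing the agent later can see what was checked and why it did or did not act.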

Of course, there are tradeoffs. Coordination layers introduce latency. Verification mechanisms can increase computational overhead and cost. And there is a delicate balance between healthy divergence and bureaucratic gridlock. Too much friction, and innovation slows. Too little, and chaos seeps in.

But what I appreciate is the philosophical stance embedded in Mira’s design. It assumes that the future will not be dominated by a single, unified AI perspective. Instead, we’ll live among many independent systems, each with its own biases and training histories. The challenge isn’t to force them into uniformity. It’s to build infrastructure that helps them converge responsibly when it matters.

If Mira succeeds, most users won’t think about conflicting model interpretations or verification rounds. They’ll simply notice that AI-powered systems behave consistently. Transactions won’t execute on wildly different assumptions. Virtual worlds won’t fracture because two agents disagreed about the rules. The blockchain won’t be the headline; it will be the quiet referee ensuring shared ground.

And if that happens, divergence won’t feel like a threat. It will feel like diversity operating within guardrails. The network will fade into the background, like electricity stabilizing a city we barely think about.

That might be the most human strategy of all.