I’ve noticed that most projects at the intersection of AI and blockchain tend to compete on scale: faster training networks, larger model marketplaces, or more powerful compute distribution.

Those are interesting directions, but they often circle around the same assumption: that the value of decentralized AI lies primarily in producing intelligence. When I started looking at Mira Network, what caught my attention was that it approaches the problem from a different angle.

Instead of focusing on generating intelligence, Mira appears more concerned with verifying it.

That distinction may sound subtle, but I think it changes the conversation.

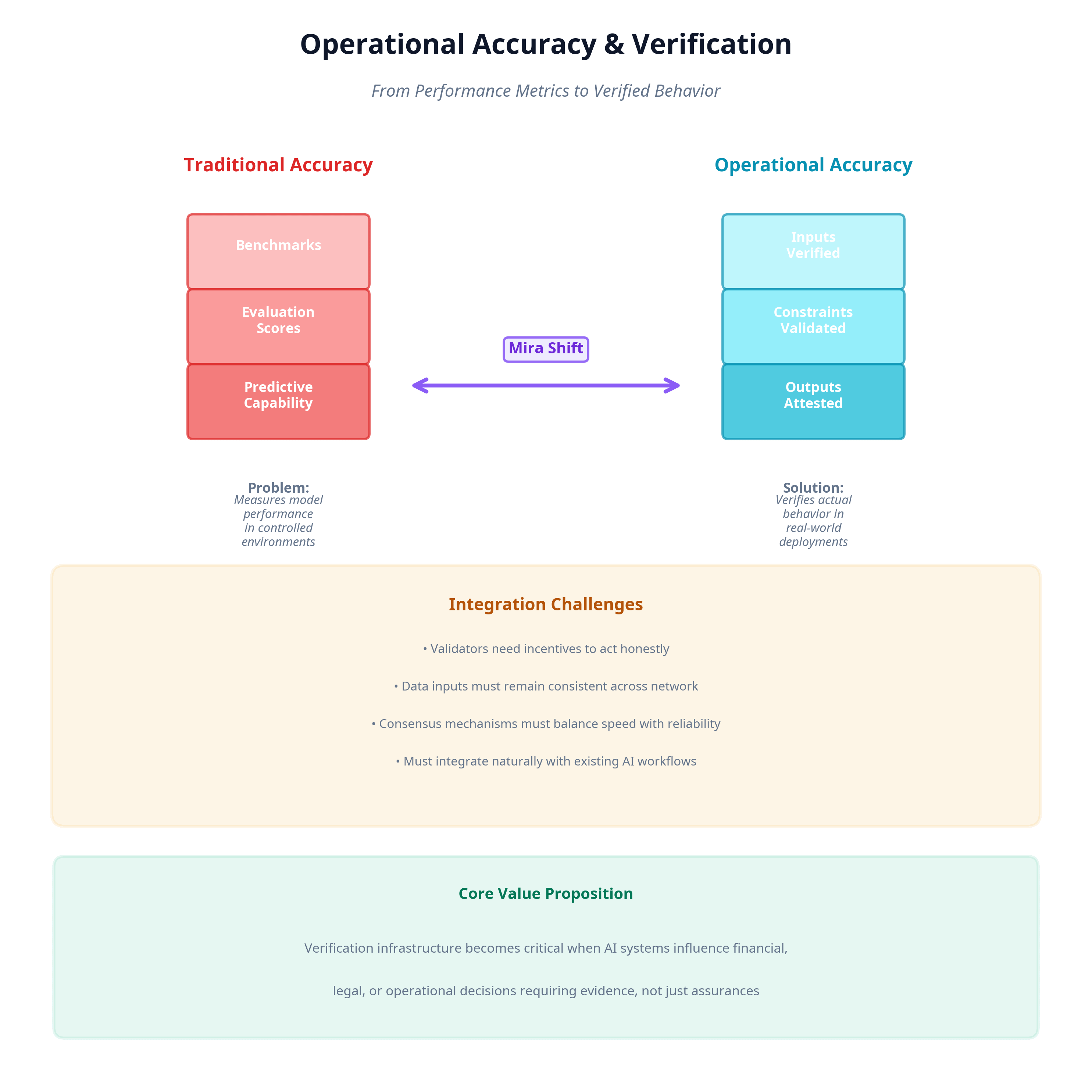

Accuracy in AI systems is often discussed in terms of model performance benchmarks, evaluation scores, or predictive capability.

But operational accuracy is something else entirely. It’s about whether a system behaved within defined parameters when it actually performed a task.

As AI systems begin influencing financial transactions, infrastructure decisions, and automated workflows, that kind of verification becomes increasingly important.

From my perspective, Mira’s infrastructure attempts to sit exactly in that space.

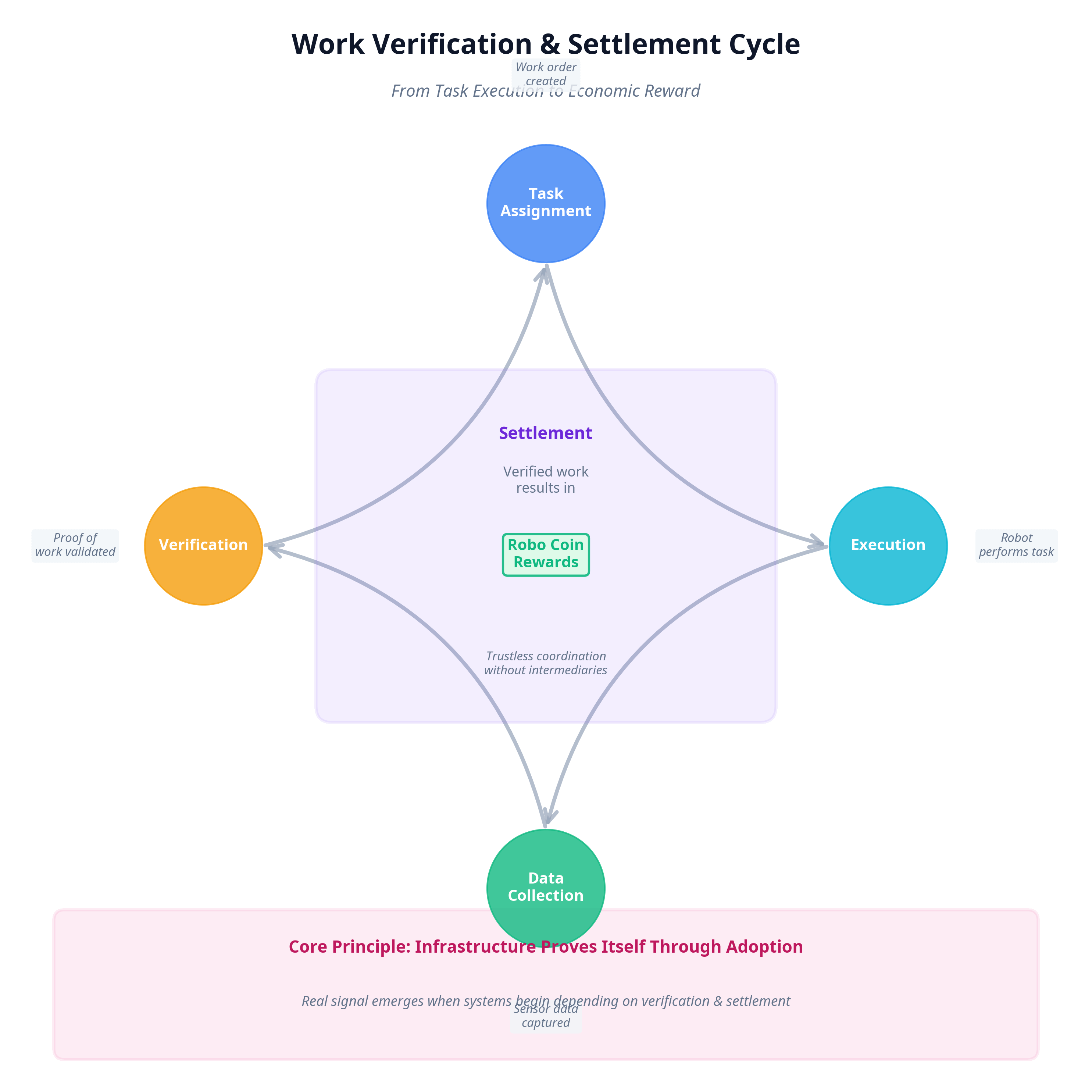

Rather than competing with AI model developers, the network focuses on validating claims about AI activity. Inputs, constraints, execution context, and outputs can be recorded and verified through decentralized infrastructure.

In theory, that creates a shared reference point for understanding what an AI system actually did. The model still performs its work, but the verification of that work doesn’t rely entirely on the operator running it.
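To make that idea concrete for myself, I sketched what a "shared reference point" could look like in its simplest form. This is not Mira's actual record format or API, which I haven't seen documented at this level. The field names, the `record_digest` and `verify_record` helpers, and the choice of SHA-256 over canonical JSON are all my own assumptions for illustration.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """Hash a record deterministically (hypothetical scheme, not Mira's)."""
    # Sorting keys and fixing separators gives the same bytes for the same
    # content, so independent parties compute the same digest.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_record(record: dict, published_digest: str) -> bool:
    """Check a record against a digest that was published elsewhere."""
    return record_digest(record) == published_digest


# Illustrative record with the four elements mentioned above.
# All values here are made up.
record = {
    "inputs": {"prompt": "approve transfer?"},
    "constraints": {"max_amount": 1000},
    "execution_context": {"model": "example-model-v1"},
    "outputs": {"decision": "approve"},
}

digest = record_digest(record)  # what the operator would publish

# A third party holding the record can check it without trusting the operator.
tampered = dict(record, outputs={"decision": "deny"})
print(verify_record(record, digest))    # matches the published digest
print(verify_record(tampered, digest))  # altered output no longer matches
```

The point of the sketch is only that once a digest is published somewhere the operator cannot quietly rewrite, any later change to the recorded inputs or outputs becomes detectable.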

I find that separation interesting because centralized AI systems typically combine both roles. The same organization builds the model, deploys it, records its actions, and explains its behavior after the fact.

That arrangement works as long as trust in the operator remains strong. But as AI systems interact with more stakeholders (financial institutions, regulators, external partners), that centralized trust model begins to look fragile.

Mira’s emphasis on decentralization seems designed to address that fragility.

Instead of relying on a single authority to maintain records, verification can occur across a distributed network. The advantage, at least conceptually, is that no single participant controls the full narrative of what happened.

For organizations that need verifiable evidence rather than assurances, that structure could provide a different level of confidence.

Still, I try not to assume that decentralization automatically improves accuracy.

Distributed verification introduces its own challenges. Validators need incentives to act honestly. Data inputs must remain consistent across the network. Consensus mechanisms must balance speed with reliability.

If any of those components fail, the system risks producing conflicting or delayed records, problems that centralized systems, for all their weaknesses, often avoid.
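A toy example helps me see how both failure modes show up. The sketch below assumes a simple two-thirds quorum rule over validator-reported digests. That rule, the `quorum_digest` function, and the validator names are hypothetical and are not a description of Mira's consensus mechanism.

```python
from collections import Counter
from typing import Optional


def quorum_digest(votes: dict[str, str], total_validators: int) -> Optional[str]:
    """Return the digest backed by at least 2/3 of all validators, else None.

    `votes` maps a validator id to the digest it reported. Validators that
    have not responded yet are simply absent from the mapping.
    """
    if not votes:
        return None
    digest, count = Counter(votes.values()).most_common(1)[0]
    # Integer comparison avoids floating-point edge cases at the threshold.
    return digest if 3 * count >= 2 * total_validators else None


# Agreement: two of three validators report the same digest.
agreed = quorum_digest({"v1": "abc", "v2": "abc", "v3": "xyz"}, total_validators=3)

# Conflict: validators saw inconsistent data, so no digest reaches quorum.
conflicting = quorum_digest({"v1": "abc", "v2": "xyz", "v3": "def"}, total_validators=3)

# Delay: only one validator has responded so far, so the record stays pending.
delayed = quorum_digest({"v1": "abc"}, total_validators=3)
```

Raising the threshold makes a false record harder to finalize but leaves more records pending, which is the speed-versus-reliability trade-off in miniature.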

Another factor I keep thinking about is integration. Infrastructure only becomes meaningful when other systems begin depending on it.

AI developers already operate in complex environments filled with monitoring tools, logging systems, and compliance frameworks. For Mira’s verification layer to matter, it needs to fit naturally within those existing workflows rather than adding excessive friction.

At the same time, I can see why the focus on accuracy and verification might become more valuable over time. AI systems are moving beyond experimental environments into sectors where decisions carry financial, legal, or operational consequences.

In those contexts, explanations alone rarely satisfy stakeholders. Evidence becomes necessary.

Mira’s architecture appears to treat that evidence as infrastructure rather than an afterthought.

Whether that focus becomes a true competitive edge remains uncertain. Many technologies promise better verification in their early stages, only to struggle when faced with real operational complexity.

But the underlying problem Mira addresses, the difficulty of establishing trust in autonomous systems, is unlikely to disappear.

For now, what I see is an infrastructure experiment that shifts the AI conversation away from intelligence itself and toward accountability.

If that shift continues to resonate with developers and institutions, Mira’s emphasis on decentralized verification may become more relevant over time.

And if it doesn’t, it will still serve as an interesting reminder that in complex technological ecosystems, the systems that verify outcomes can sometimes matter as much as the systems that produce them.