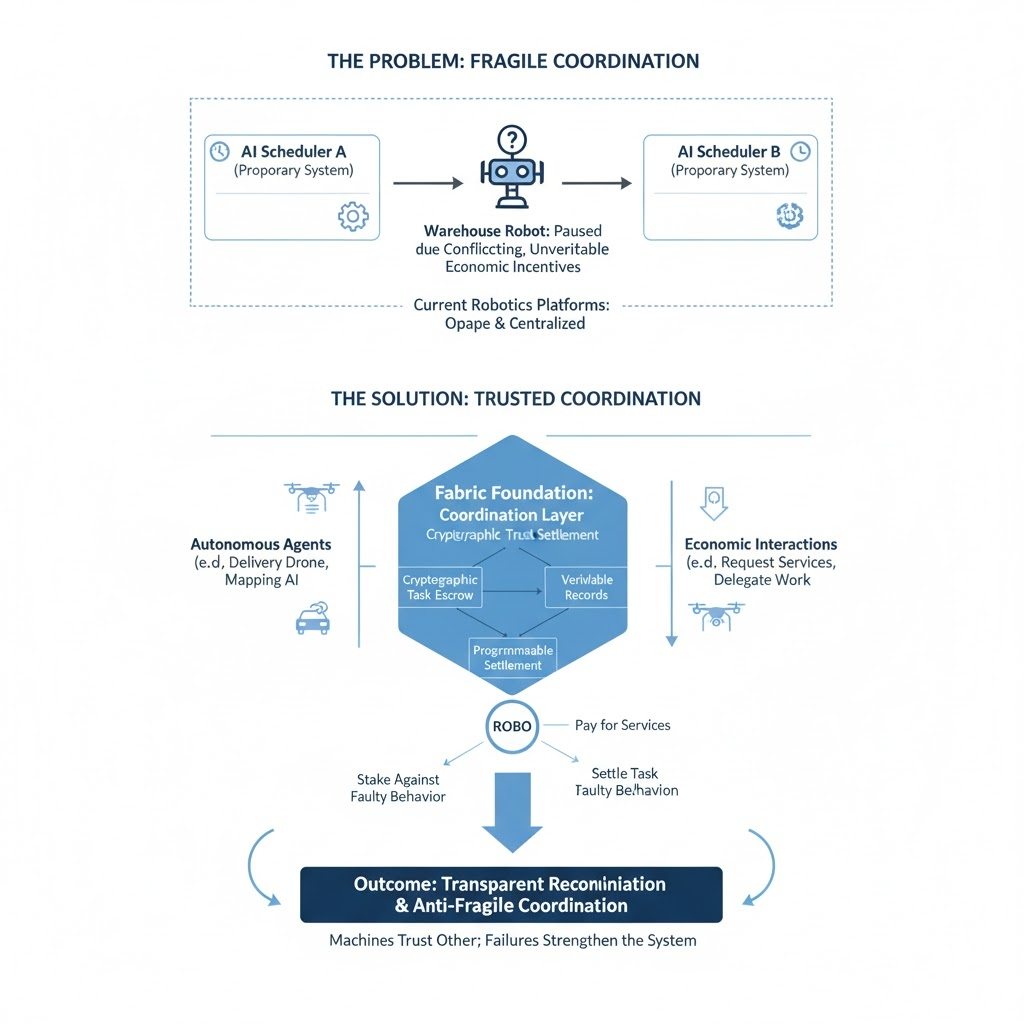

I noticed something odd while reading a robotics incident report late one night. A warehouse robot had paused in the middle of a task because two separate AI schedulers issued conflicting instructions. Neither command was malformed or illegal; the robot simply had no way to validate which one carried a legitimate economic incentive.

That incident captures something that struck me as I thought about robotic systems acting autonomously: the biggest problem is not the intelligence or technology of the robots, but how machine systems coordinate and operate in an uncertain environment. Machines can execute tasks perfectly, yet they still struggle to determine whose instructions they should economically trust.

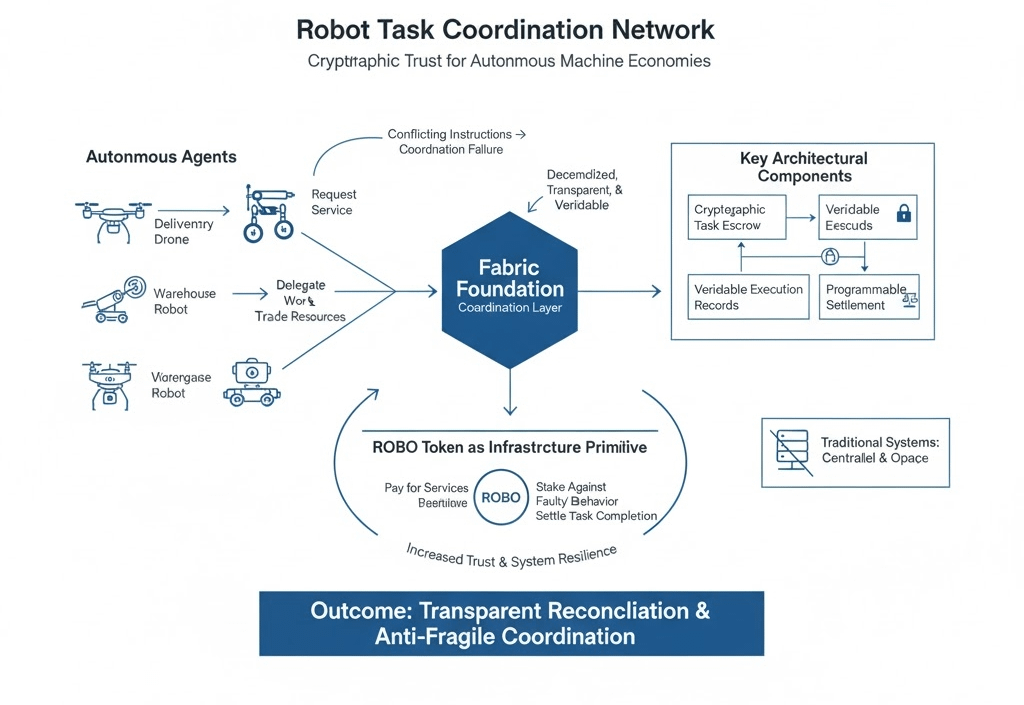

The problem becomes clearer when you look at how most robotics platforms operate today. Decisions, logs, and task histories are locked inside proprietary systems, which means verification rarely exists outside the operator that controls the robot. Once machines start interacting across networks (requesting services, trading resources, or delegating work), those closed environments become fragile coordination points.

I often imagine a near-future logistics ecosystem where machines negotiate tasks the way markets negotiate prices. A delivery drone might request loading services from a warehouse robot, while a mapping AI sells navigation updates to autonomous vehicles moving through the same region. In that world, robots are no longer just tools; they become economic actors.

The infrastructure problem emerges quickly. Traditional systems rely on centralized schedulers or private databases to resolve disputes, but machine economies generate interactions too quickly and across too many participants for that model to scale. Without transparent reconciliation layers, autonomous agents can transact, but they cannot independently verify the legitimacy of the outcomes.

This is why some of the architecture around @Fabric Foundation has been interesting to watch. Instead of treating robotics purely as a software challenge, the system frames coordination itself as the missing layer, using cryptographic task escrow, verifiable execution records, and programmable settlement to allow machines to confirm that work actually occurred.
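To make the idea concrete, here is a minimal sketch of how task escrow and a verifiable execution record could fit together. It is not Fabric Foundation's actual design: the `TaskEscrow` class, the `sign_record` helper, and the record fields are all hypothetical, and an HMAC over a shared key stands in for the public-key signature a real system would more plausibly use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


def sign_record(record: dict, key: bytes) -> str:
    """Return an HMAC-SHA256 tag over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


@dataclass
class TaskEscrow:
    """Hypothetical escrow: payment stays locked until a valid record arrives."""
    task_id: str
    payment: int        # amount locked by the requester (arbitrary units)
    worker_key: bytes   # assumption: shared key standing in for a real public key
    state: str = "locked"

    def settle(self, record: dict, tag: str) -> str:
        """Release payment if the record names this task and its tag verifies;
        otherwise refund the requester."""
        if self.state != "locked":
            raise ValueError("escrow already settled")
        expected = sign_record(record, self.worker_key)
        valid = (
            record.get("task_id") == self.task_id
            and hmac.compare_digest(expected, tag)
        )
        self.state = "released" if valid else "refunded"
        return self.state


key = b"demo-worker-key"
escrow = TaskEscrow(task_id="load-pallet-17", payment=5, worker_key=key)
record = {"task_id": "load-pallet-17", "result": "loaded"}
print(escrow.settle(record, sign_record(record, key)))  # prints "released"
```

The point of the sketch is that settlement depends on a check anyone holding the verification key can repeat, rather than on the word of whichever scheduler issued the command.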

Within that environment, #ROBO tokens start to look less like speculative assets and more like infrastructure primitives. If robots need to pay for services, stake against faulty behavior, or settle task completion autonomously, they require a neutral medium of exchange that software agents can interact with without relying on centralized intermediaries.
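The pay, stake, and settle loop described above can be sketched as a toy in-memory ledger. Everything here is an assumption made for illustration: the `Ledger` class, the agent names, and the rule that a failed task forfeits up to the fee from the worker's stake. It does not model how #ROBO or any real token behaves.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical ledger tracking spendable balances and locked stakes."""
    balances: dict = field(default_factory=dict)
    stakes: dict = field(default_factory=dict)

    def stake(self, agent: str, amount: int) -> None:
        """Move funds from an agent's balance into its locked stake."""
        if self.balances.get(agent, 0) < amount:
            raise ValueError("insufficient balance to stake")
        self.balances[agent] -= amount
        self.stakes[agent] = self.stakes.get(agent, 0) + amount

    def resolve(self, worker: str, requester: str, fee: int, success: bool) -> None:
        """On success the requester pays the fee; on failure the worker's
        stake is slashed (capped at what is staked) and paid to the requester."""
        if success:
            if self.balances.get(requester, 0) < fee:
                raise ValueError("requester cannot cover the fee")
            self.balances[requester] -= fee
            self.balances[worker] = self.balances.get(worker, 0) + fee
        else:
            penalty = min(fee, self.stakes.get(worker, 0))
            self.stakes[worker] = self.stakes.get(worker, 0) - penalty
            self.balances[requester] = self.balances.get(requester, 0) + penalty


ledger = Ledger(balances={"drone": 10, "warehouse_robot": 10})
ledger.stake("warehouse_robot", 4)
ledger.resolve("warehouse_robot", "drone", fee=3, success=False)
print(ledger.balances["drone"], ledger.stakes["warehouse_robot"])  # prints "13 1"
```

In this toy version the stake is what gives a requester recourse without appealing to a central scheduler: faulty work has a cost that is settled by rule.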

What intrigues me most is the idea that such systems could become antifragile. Markets inevitably introduce volatility, outages, and unexpected behavior, but protocols designed around verification allow failures to surface transparently rather than remain hidden inside opaque platforms. Each failure becomes information that strengthens the coordination layer.

Of course, the irony is that many of today’s “autonomous” machines still struggle with very simple things. Every time I see a robot hesitating in front of an obstacle that a human would casually step around, I’m reminded that intelligence may arrive slowly, but the infrastructure that lets machines trust each other might arrive first.

$ROBO #BTC #ETH #Write2Earn #crypto