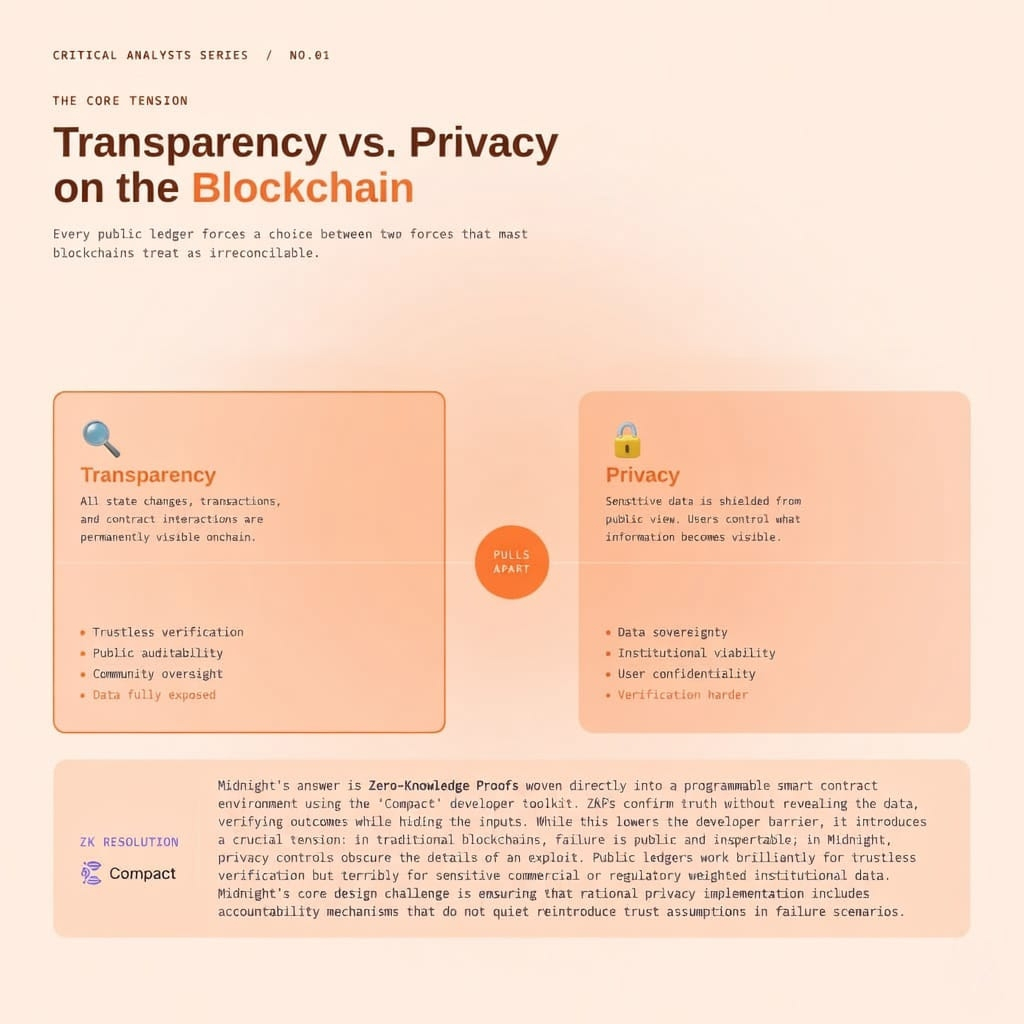

I really want to believe in what Midnight is building. I genuinely do. The problem they’ve identified is spot on, and anyone who has spent time thinking seriously about blockchain infrastructure knows that the "transparency-first" model has hit a wall. Public ledgers are brilliant for trustless verification, but they’re honestly terrible for anything involving sensitive commercial data, personal info, or institutional participation that carries heavy regulatory weight.

Midnight looks at that gap and offers something concrete: zero-knowledge proofs woven directly into a programmable smart contract environment. With a familiar language for developers and privacy treated as architecture rather than an afterthought, the case for Midnight is incredibly coherent on paper.

But there is a tension underneath all of this that I haven’t seen the project address with enough honesty yet. Privacy and verifiability aren’t just technically opposing forces; they are socially opposing forces. How Midnight navigates that opposition matters far more than any particular cryptographic implementation.

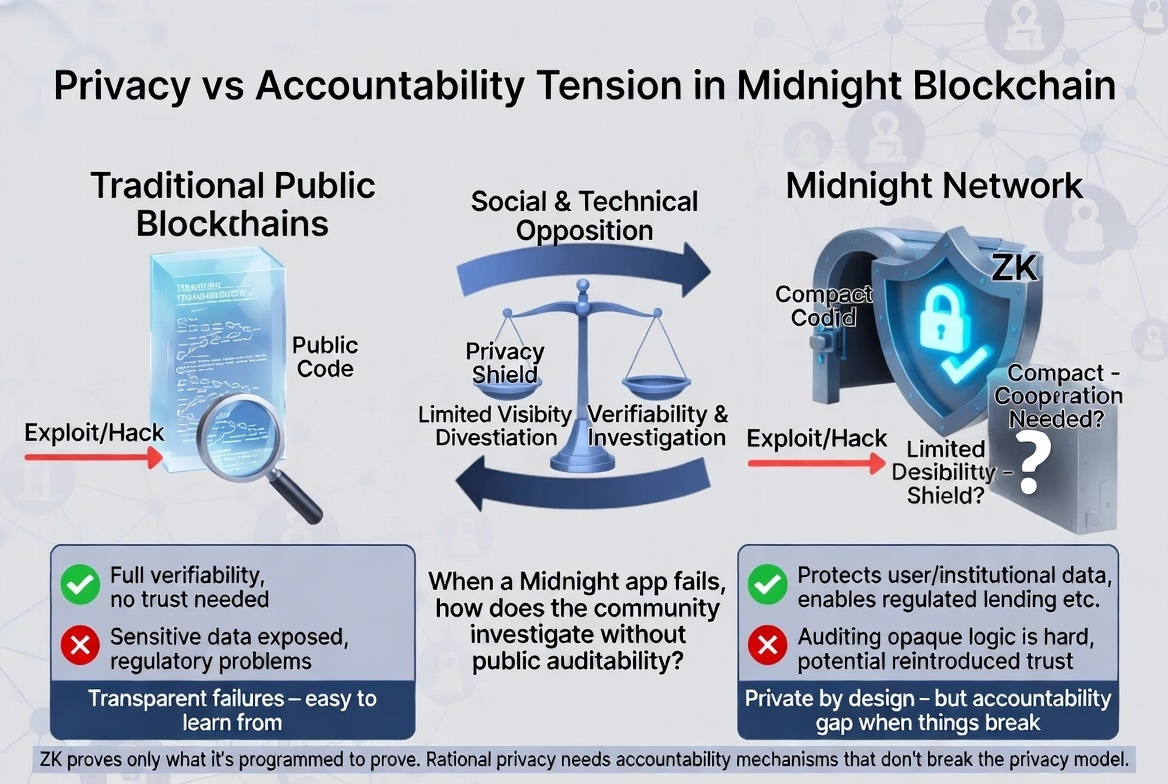

Imagine a lending protocol built on Midnight. A borrower can prove they meet collateral requirements without revealing their full balance sheet, and the lender gets confirmation without unnecessary exposure. The ZK proof does its job perfectly. Now imagine that same protocol gets exploited. Maybe the proof logic has an edge case the devs didn’t anticipate, or the contract written in Compact has a subtle flaw that lets someone game the system. Funds move, and something has clearly gone wrong.

How does the community investigate what happened inside a system specifically designed to obscure those details?

Traditional blockchains are "ugly" when they fail, but they are transparent about how they fail. Every transaction and state change sits on a public ledger that independent analysts can examine. Exploits get reconstructed, postmortems get written, and the community learns because the evidence is visible. Midnight’s confidentiality controls, by design, limit that visibility. The very feature that protects user data in normal operation becomes a massive obstacle to accountability when something breaks.

The project would likely respond that ZK proofs provide the verification layer: the network confirms validity without exposure. But that answer sidesteps the harder question. Proof systems only verify what they are programmed to verify; they don’t catch what they weren’t designed to check. When a contract behaves unexpectedly, the question isn’t whether the proof verified correctly, but whether the contract logic was sound in the first place. Auditing logic you cannot fully inspect from the outside is a much harder problem than auditing a transparent contract.

Lowering the barrier to entry with tools like Compact is useful, but it’s a double-edged sword. Lower barriers mean more developers, with widely varying levels of expertise, writing privacy-heavy code that users will rely on. Accessible tooling combined with opaque execution is not an obviously safe combination.

Midnight positions "rational privacy" as the solution, but implementing it rationally requires accountability mechanisms that don’t conflict with the privacy model itself. The question I keep returning to is straightforward: when a Midnight-based application fails in a way that harms users, what does the investigation actually look like? If the answer depends heavily on developer cooperation rather than public auditability, has the network quietly reintroduced the trust assumptions it was supposed to eliminate?

$NIGHT #NIGHT @MidnightNetwork #MidnightNetwork #Privacy #BinanceSquare