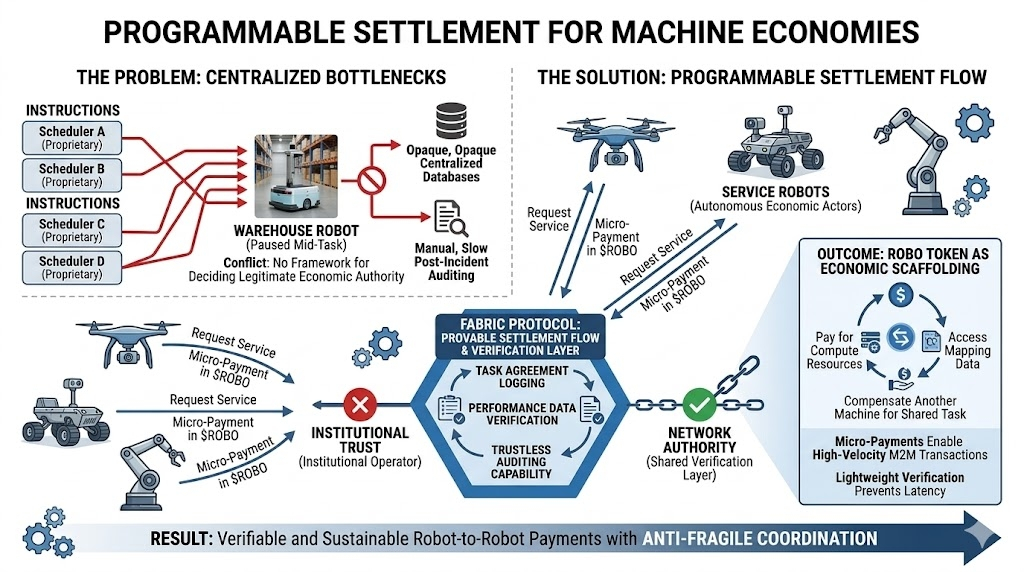

I remember reading a robotics incident report late one evening. A warehouse robot had paused mid-task because two separate scheduling systems issued conflicting instructions. Nothing had technically failed. The robot simply had no framework for deciding which command carried legitimate authority.

That small moment stuck with me, because it exposed something deeper about robotics infrastructure.

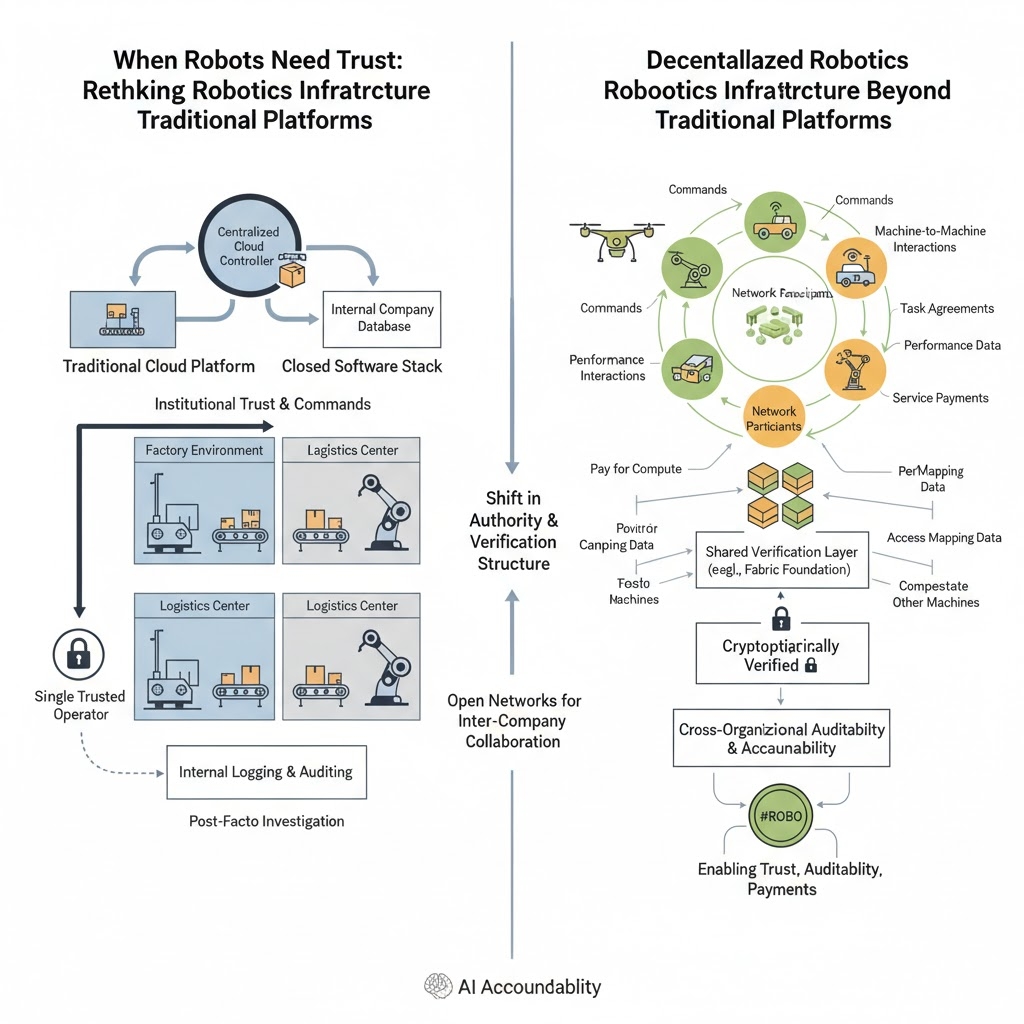

Traditional robotics platforms were never really designed for autonomous economic decision making. Most systems rely on centralized orchestration: a cloud controller, an internal company database, or a closed software stack deciding what machines should do next. It works well inside controlled environments like factories or logistics centers. But it assumes a single trusted operator.

The moment robots start operating across organizations, cities, or digital marketplaces, that assumption begins to break.

This is where projects experimenting with decentralized robotics infrastructure, like the ecosystem around @Fabric Foundation, start to look less like speculative crypto experiments and more like attempts to build trust layers for machines.

The core difference isn’t just blockchain versus non-blockchain. It’s about how authority and verification are structured.

In traditional robotics systems, trust is institutional. If a robot receives instructions, the legitimacy of those commands comes from the operator running the platform. Logging and auditing exist, but they are internal records. If something goes wrong, investigation usually happens after the fact.

Decentralized robotics frameworks attempt to treat machines more like network participants. Commands, task agreements, and performance data can be recorded in a shared verification layer, so the ability to audit activity extends beyond any single operator's logs.
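To make the idea of a shared, auditable record a little more concrete, here is a minimal sketch of a hash-chained log, where each entry commits to the one before it. Everything in it (the record fields, the robot and task names, the `append` and `verify` helpers) is my own illustration, not how Fabric or any specific protocol actually works.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry (an assumption)

def record_hash(record: dict, prev_hash: str) -> str:
    # Hash the record together with the previous entry's hash.
    payload = json.dumps(record, sort_keys=True).encode() + prev_hash.encode()
    return hashlib.sha256(payload).hexdigest()

def append(log: list, record: dict) -> None:
    # Each new entry commits to the entry before it.
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": record_hash(record, prev_hash)})

def verify(log: list) -> bool:
    # Anyone holding a copy of the log can recompute the whole chain.
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append(log, {"robot": "drone-7", "event": "task_accepted", "task": "deliver-42"})
append(log, {"robot": "drone-7", "event": "task_completed", "task": "deliver-42"})
print(verify(log))

log[0]["record"]["event"] = "task_rejected"  # quietly edit history
print(verify(log))
```

The point is only that an outside party can detect a rewritten entry without trusting whoever stores the log. A real network would also need to agree on who is allowed to append, which this sketch ignores.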

That simple change matters a great deal when it comes to holding AI-driven machines accountable.

Think about large fleets of delivery drones, warehouse robots, and autonomous vehicles working across company boundaries. Who verifies which machine completed which task? Who confirms that a robot actually delivered a package, rather than merely claiming it did?

Traditional platforms handle this through internal APIs and private databases. A decentralized robotics protocol treats it as a coordination problem. Tasks, performance metrics, and service payments can be cryptographically verified rather than institutionally trusted.
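Here is a rough sketch of what "verified rather than trusted" could look like for a single delivery claim. I'm using HMAC from the Python standard library as a stand-in; a real decentralized protocol would presumably use public-key signatures so that verifiers never hold the robot's secret. The key, the claim fields, and the function names are all hypothetical.

```python
import hashlib
import hmac
import json

def sign_claim(claim: dict, key: bytes) -> str:
    # Serialize deterministically so signer and verifier hash the same bytes.
    message = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()

def verify_claim(claim: dict, tag: str, key: bytes) -> bool:
    # Constant-time comparison of the recomputed tag against the one supplied.
    return hmac.compare_digest(sign_claim(claim, key), tag)

robot_key = b"demo-key-for-drone-7"  # illustrative only
claim = {"robot": "drone-7", "task": "deliver-42", "status": "delivered"}
tag = sign_claim(claim, robot_key)

print(verify_claim(claim, tag, robot_key))

# A claim altered after signing no longer matches its tag.
forged = dict(claim, task="deliver-99")
print(verify_claim(forged, tag, robot_key))
```

Note what this does not prove: a valid tag shows the claim came from the key holder unmodified, not that the package physically arrived. Bridging that gap is the hard part.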

The comparison between traditional and decentralized robotics resembles private intranets versus the open internet: closed systems excel internally, but open networks become essential as organizational boundaries disappear. The development of shared infrastructure for trust, auditability, and payments could be the critical scaffolding for future robotics collaboration.

In that framing, #ROBO becomes less about speculation and more about enabling machine-to-machine economic interactions. Robots might pay for compute resources, access mapping data, or compensate another machine for completing part of a shared task.

It sounds futuristic, but the economic logic is surprisingly simple. Machines performing work may eventually need ways to prove, record, and settle that work autonomously.

That said, I have my doubts. Robotics systems already struggle with real-world reliability, and adding cryptographic verification layers introduces new complexity. Robots operating in unpredictable environments need fast decision cycles, and blockchains are not exactly famous for millisecond latency.

So the real challenge for decentralized robotics platforms isn’t ideological—it’s architectural. Verification layers must remain lightweight enough that they don’t slow down the machines they’re supposed to coordinate.
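One way to picture "lightweight" is batching: the robot logs events locally inside its fast loop and only occasionally commits a single Merkle root that summarizes the whole batch. This is a sketch under my own assumptions (the `BatchedCommitter` class, the batch size, and the list standing in for a shared ledger are all hypothetical), not a description of any existing platform.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root(leaves: list) -> bytes:
    # Reduce a batch of events to a single 32-byte commitment.
    if not leaves:
        return sha256(b"")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [sha256(level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
    return level[0]

class BatchedCommitter:
    def __init__(self, batch_size: int):
        self.batch_size = batch_size
        self.pending = []
        self.committed_roots = []  # stands in for a slow shared ledger

    def record(self, event: bytes) -> None:
        # Cheap local append; safe to call from a fast control loop.
        self.pending.append(event)
        if len(self.pending) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        # One commitment per batch instead of one per event.
        if self.pending:
            self.committed_roots.append(merkle_root(self.pending))
            self.pending = []

committer = BatchedCommitter(batch_size=4)
for step in range(10):
    committer.record(f"step-{step}".encode())

print(len(committer.committed_roots), len(committer.pending))
```

The trade-off is delay: events are only externally verifiable once their batch is committed, so the batch size becomes a knob between latency in the control loop and freshness of the audit trail.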

But the concept continues to intrigue me.

Because when I compare traditional robotics platforms to emerging decentralized ones, the difference feels similar to private intranets versus the early internet. Closed systems work beautifully inside organizational walls. Open networks start to matter when those walls disappear.

If robots are going to collaborate across companies, cities, and digital markets, they may need shared infrastructure for trust, auditability, and payments.

And maybe that’s the quiet idea behind systems experimenting with the Robo token: not building better robots, but building the institutional scaffolding that robots themselves might rely on.

Of course, this is all still theoretical. For now, most robots are still arguing with their own scheduling software in warehouses. Which, if I’m being honest, feels strangely relatable. Some days my calendar can’t agree with itself either.
